This procedure was tested on BCM 10 with Ubuntu 22.04.

Preparation

1. Start by deploying the latest Kubernetes version with the cm-kubernetes-setup utility, using the following components:

- containerd

- calico as the network plugin

- No Kyverno

- Permission manager

- No additional operators

2. Install open-iscsi in the software image

root@longhorn:~# cm-chroot-sw-img /cm/images/default-image/
root@default-image:/# apt update
root@default-image:/# apt install open-iscsi

3. Disable multipathd in the software image

root@default-image:/# systemctl disable multipathd
root@default-image:/# exit

4. Load the iscsi_tcp kernel module at startup by adding it to /etc/modules in the software image:

root@longhorn:~# cat /cm/images/default-image/etc/modules
# /etc/modules: kernel modules to load at boot time.
#
# This file contains the names of kernel modules that should be loaded
# at boot time, one per line. Lines beginning with "#" are ignored.
iscsi_tcp
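You can edit /etc/modules by hand, or you can script the change so that running it twice does not add a second entry. The sketch below assumes the default software image path used above. `add_boot_module` is a hypothetical helper name, not a BCM tool.

```shell
#!/bin/sh
# Hypothetical helper (not part of BCM): append a kernel module name to a
# modules file, one name per line, only if that exact line is not present yet.
add_boot_module() {
    modules_file=$1
    module=$2
    # -x matches whole lines, -F treats the name as a fixed string,
    # -s silences the error if the file does not exist yet
    if ! grep -qsxF "$module" "$modules_file"; then
        # Make sure the file ends with a newline before appending
        if [ -s "$modules_file" ] && [ -n "$(tail -c 1 "$modules_file")" ]; then
            printf '\n' >> "$modules_file"
        fi
        printf '%s\n' "$module" >> "$modules_file"
    fi
}

# Usage on the head node (path assumes the default software image):
# add_boot_module /cm/images/default-image/etc/modules iscsi_tcp
```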

5. Enable iscsid in the software image, and generate a unique iSCSI initiator name on each compute node:

root@longhorn:~# cm-chroot-sw-img /cm/images/default-image/
root@default-image:/# systemctl enable iscsid
root@default-image:/# exit
root@longhorn:~# pdsh -g computenode 'echo "InitiatorName=$(/sbin/iscsi-iname)" > /etc/iscsi/initiatorname.iscsi'
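Each node must end up with a different initiator name, so it is worth checking the result before the files are protected from image syncs. The sketch below is minimal and assumes pdsh's usual `node: output` line prefix. `find_duplicate_iqns` is a hypothetical helper.

```shell
#!/bin/sh
# Hypothetical helper: read "node: InitiatorName=<iqn>" lines (as printed by
# pdsh) on stdin and report any IQN that appears on more than one node.
find_duplicate_iqns() {
    # Keep only the text after "InitiatorName=", then list repeated values
    dups=$(sed -n 's/.*InitiatorName=//p' | sort | uniq -d)
    if [ -n "$dups" ]; then
        printf 'duplicate initiator name: %s\n' $dups
        return 1
    fi
    echo "all initiator names are unique"
}

# Usage on the head node:
# pdsh -g computenode 'grep ^InitiatorName= /etc/iscsi/initiatorname.iscsi' | find_duplicate_iqns
```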

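6. Exclude /etc/iscsi/initiatorname.iscsi from the sync/install and update exclude lists of the default category, so that the per-node initiator names are not overwritten from the software image: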

root@longhorn:~# cmsh
[longhorn]% category use default
[longhorn->category[default]]% set excludelistsyncinstall
# (an editor opens: add the following line, then save and exit)
- /etc/iscsi/initiatorname.iscsi
[longhorn->category*[default*]]% commit
[longhorn->category[default]]% set excludelistupdate
# (add the same line, then save and exit)
- /etc/iscsi/initiatorname.iscsi
[longhorn->category*[default*]]% commit
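After committing, you can check both lists without opening an editor. This sketch makes two assumptions: that `cmsh -c` accepts the same commands as the interactive session, and that `get` prints the list contents. `check_exclude_lists` is a hypothetical helper name.

```shell
#!/bin/sh
# Hypothetical helper: check that both exclude lists of a category contain
# a "- <path>" entry. Assumes `cmsh -c "category use <cat>; get <list>"`
# prints the contents of the list.
check_exclude_lists() {
    category=$1
    path=$2
    status=0
    for list in excludelistsyncinstall excludelistupdate; do
        if cmsh -c "category use $category; get $list" | grep -qF -- "- $path"; then
            echo "$list: ok"
        else
            echo "$list: $path missing"
            status=1
        fi
    done
    return $status
}

# Usage on the head node:
# check_exclude_lists default /etc/iscsi/initiatorname.iscsi
```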

7. Reboot compute nodes

root@longhorn:~# cmsh
[longhorn]% device
[longhorn->device]% reboot -n node001..node003
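Before you run the environment check, wait until Kubernetes reports all nodes as Ready again. Below is a minimal sketch built on `kubectl wait`. `wait_for_nodes_ready` is a hypothetical wrapper. Start it a minute or so after the reboot: a node can keep its last reported status for a short while before the control plane marks it as not ready.

```shell
#!/bin/sh
# Hypothetical wrapper: block until every Kubernetes node reports the Ready
# condition, or fail after the timeout (default 600s).
wait_for_nodes_ready() {
    timeout=${1:-600s}
    kubectl wait --for=condition=Ready node --all --timeout="$timeout"
}

# Usage on the head node, with the kubernetes module loaded:
# wait_for_nodes_ready 900s
```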

8. Verify Longhorn prerequisites

root@longhorn:~# module load kubernetes/
root@longhorn:~# curl -sSfL https://raw.githubusercontent.com/longhorn/longhorn/v1.5.3/scripts/environment_check.sh | bash
[INFO] Required dependencies 'kubectl jq mktemp sort printf' are installed.
[INFO] All nodes have unique hostnames.
[INFO] Waiting for longhorn-environment-check pods to become ready (0/3)...
[INFO] Waiting for longhorn-environment-check pods to become ready (0/3)...
[INFO] All longhorn-environment-check pods are ready (3/3).
[INFO] MountPropagation is enabled
[INFO] Checking kernel release...
[INFO] Checking iscsid...
[INFO] Checking multipathd...
[INFO] Checking packages...
[INFO] Checking nfs client...
[INFO] Cleaning up longhorn-environment-check pods...
[INFO] Cleanup completed.

Note on compute nodes with multiple disks

By default, Longhorn stores volumes and replicas under /var/lib/longhorn. For compute nodes with multiple disks that can be used for Longhorn volumes, we suggest creating a software RAID across all available disks and mounting it on /var/lib/longhorn through the disksetup. If you would rather keep the disks separate, mount each disk on its own path, such as /mnt/disk1, /mnt/disk2, etc. Then, once Longhorn is installed, add the disks for each node from the Longhorn UI (longhorn-frontend).
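As an alternative to the UI, separate disks can probably be added by patching the Longhorn node resource (`nodes.longhorn.io` in the longhorn-system namespace). Treat the sketch below as an assumption to verify. The field names under `spec.disks` (`path`, `allowScheduling`, `storageReserved`, `tags`) reflect our understanding of the Longhorn Node resource, so compare them with `kubectl -n longhorn-system get nodes.longhorn.io <node> -o yaml` first. `longhorn_disks_patch` is a hypothetical helper. It names each disk after the last path component, so those components must be unique per node.

```shell
#!/bin/sh
# Hypothetical helper: print a JSON merge patch that adds one Longhorn disk
# per mount point (e.g. /mnt/disk1 -> disk name "disk1"). The spec.disks
# field names are an assumption; check them against your Longhorn version.
longhorn_disks_patch() {
    entries=""
    for mnt in "$@"; do
        name=$(basename "$mnt")
        entry=$(printf '"%s":{"path":"%s","allowScheduling":true,"storageReserved":0,"tags":[]}' "$name" "$mnt")
        # Join the per-disk entries with commas
        entries=${entries:+$entries,}$entry
    done
    printf '{"spec":{"disks":{%s}}}\n' "$entries"
}

# Usage after Longhorn is installed (node001 is an example node name):
# kubectl -n longhorn-system patch nodes.longhorn.io node001 --type merge \
#     -p "$(longhorn_disks_patch /mnt/disk1 /mnt/disk2)"
```

A merge patch adds the new entries to the `disks` map and leaves existing entries, such as the default disk, in place.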

Installing Longhorn

1. Add the Longhorn Helm repository:

root@longhorn:~# helm repo add longhorn https://charts.longhorn.io
"longhorn" has been added to your repositories
root@longhorn:~# helm repo update
Hang tight while we grab the latest from your chart repositories...
...Successfully got an update from the "metallb" chart repository
...Successfully got an update from the "longhorn" chart repository
Update Complete. ⎈Happy Helming!⎈

2. Install the Longhorn Helm chart, pinned to version 1.5.3:

root@longhorn:~# helm install longhorn longhorn/longhorn --namespace longhorn-system --create-namespace --version 1.5.3
NAME: longhorn
LAST DEPLOYED: Tue Nov 28 16:13:03 2023
NAMESPACE: longhorn-system
STATUS: deployed
REVISION: 1
TEST SUITE: None
NOTES:
Longhorn is now installed on the cluster!
Please wait a few minutes for other Longhorn components such as CSI deployments, Engine Images, and Instance Managers to be initialized.
Visit our documentation at https://longhorn.io/docs/
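The chart defaults can also be set explicitly at install time. This records the choices and makes the command safe to rerun. The sketch assumes the chart values `defaultSettings.defaultDataPath` and `persistence.defaultClassReplicaCount`, which exist in the Longhorn chart as far as we know; confirm them with `helm show values longhorn/longhorn --version 1.5.3`. `install_longhorn` is a hypothetical wrapper. It uses `helm upgrade --install` instead of `helm install`, so a second run upgrades the release rather than failing.

```shell
#!/bin/sh
# Hypothetical wrapper around the install command above that also pins the
# default data path and the replica count of the default "longhorn" class.
install_longhorn() {
    data_path=${1:-/var/lib/longhorn}
    replicas=${2:-3}
    helm upgrade --install longhorn longhorn/longhorn \
        --namespace longhorn-system --create-namespace --version 1.5.3 \
        --set defaultSettings.defaultDataPath="$data_path" \
        --set persistence.defaultClassReplicaCount="$replicas"
}

# Usage on the head node:
# install_longhorn /var/lib/longhorn 3
```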

3. Check that all Longhorn pods are running:

root@longhorn:~# kubectl get pods -n longhorn-system
NAME                                                READY   STATUS    RESTARTS   AGE
csi-attacher-f98ff75fb-46h2x                        1/1     Running   0          2m20s
csi-attacher-f98ff75fb-sqxc4                        1/1     Running   0          2m20s
csi-attacher-f98ff75fb-tvb86                        1/1     Running   0          2m20s
csi-provisioner-65cb5cc4ff-db46z                    1/1     Running   0          2m20s
csi-provisioner-65cb5cc4ff-hbxdw                    1/1     Running   0          2m20s
csi-provisioner-65cb5cc4ff-vl2xt                    1/1     Running   0          2m20s
csi-resizer-77cddbfc7c-brrx8                        1/1     Running   0          2m20s
csi-resizer-77cddbfc7c-d4nq7                        1/1     Running   0          2m20s
csi-resizer-77cddbfc7c-pqs6v                        1/1     Running   0          2m20s
csi-snapshotter-78cb65cc4f-9whwc                    1/1     Running   0          2m20s
csi-snapshotter-78cb65cc4f-nhb5c                    1/1     Running   0          2m20s
csi-snapshotter-78cb65cc4f-slbzn                    1/1     Running   0          2m20s
engine-image-ei-68f17757-9rbzp                      1/1     Running   0          2m36s
engine-image-ei-68f17757-hj7rw                      1/1     Running   0          2m36s
engine-image-ei-68f17757-qrf4t                      1/1     Running   0          2m36s
instance-manager-0030104d0ef614616bde207391756aa4   1/1     Running   0          2m35s
instance-manager-76f7fbb4c54feea1f267aaa5dfcccac0   1/1     Running   0          2m36s
instance-manager-80b6a95c946519d21e4e65142b0646f5   1/1     Running   0          2m29s
longhorn-csi-plugin-859zw                           3/3     Running   0          2m19s
longhorn-csi-plugin-f2dtv                           3/3     Running   0          2m19s
longhorn-csi-plugin-h2fz2                           3/3     Running   0          2m19s
longhorn-driver-deployer-569566d59f-7pfbt           1/1     Running   0          2m52s
longhorn-manager-t4hdp                              1/1     Running   0          2m52s
longhorn-manager-wcq2g                              1/1     Running   0          2m52s
longhorn-manager-zh48s                              1/1     Running   0          2m52s
longhorn-ui-6549ffb4d7-9dv28                        1/1     Running   0          2m52s
longhorn-ui-6549ffb4d7-xq249                        1/1     Running   0          2m52s
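The pods can take a few minutes to become ready. Instead of scanning the list by eye, you can filter out the ones that are not healthy yet. `kubectl -n longhorn-system wait --for=condition=Ready pod --all --timeout=600s` is a simpler alternative. The sketch below uses `longhorn_unready_pods`, a hypothetical helper that relies on the default column order of `kubectl get pods` (NAME READY STATUS RESTARTS AGE).

```shell
#!/bin/sh
# Hypothetical helper: print Longhorn pods that are not Running or whose
# READY column (x/y) shows fewer ready containers than expected.
# Prints nothing when every pod is healthy.
longhorn_unready_pods() {
    kubectl -n longhorn-system get pods --no-headers |
        awk '{ split($2, r, "/"); if ($3 != "Running" || r[1] != r[2]) print $1, $2, $3 }'
}

# Usage: poll until the output is empty
# while [ -n "$(longhorn_unready_pods)" ]; do sleep 10; done
```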

4. Create basic authentication and an Ingress for the Longhorn frontend (replace the example user name and password, root/system, with your own):

root@longhorn:~# USER=root; PASSWORD=system; echo "${USER}:$(openssl passwd -stdin -apr1 <<< "${PASSWORD}")" > auth
root@longhorn:~# kubectl -n longhorn-system create secret generic basic-auth --from-file=auth
root@longhorn:~# cat > longhorn-ingress.yml <<'EOF'
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: longhorn-ingress
  namespace: longhorn-system
  annotations:
    # type of authentication
    nginx.ingress.kubernetes.io/auth-type: basic
    # prevent the controller from redirecting (308) to HTTPS
    nginx.ingress.kubernetes.io/ssl-redirect: 'false'
    # name of the secret that contains the user/password definitions
    nginx.ingress.kubernetes.io/auth-secret: basic-auth
    # message to display with an appropriate context why the authentication is required
    nginx.ingress.kubernetes.io/auth-realm: 'Authentication Required '
    # custom max body size for file uploading like backing image uploading
    nginx.ingress.kubernetes.io/proxy-body-size: 10000m
spec:
  rules:
  - http:
      paths:
      - pathType: Prefix
        path: "/"
        backend:
          service:
            name: longhorn-frontend
            port:
              number: 80
EOF
root@longhorn:~# kubectl -n longhorn-system apply -f longhorn-ingress.yml
ingress.networking.k8s.io/longhorn-ingress created

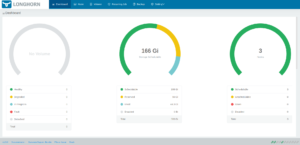
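You can wrap the authentication part of this step so that it is safe to rerun. With the wrapper, the auth file does not collect duplicate entries, and an existing basic-auth secret is updated instead of causing an error. The sketch uses `create_basic_auth`, a hypothetical helper. The `--dry-run=client -o yaml | kubectl apply -f -` pipeline is a common create-or-update idiom.

```shell
#!/bin/sh
# Hypothetical helper: (re)create the htpasswd-style auth file and the
# basic-auth secret referenced by the Ingress annotations.
create_basic_auth() {
    user=$1
    password=$2
    auth_file=${3:-auth}
    # The password goes to openssl on stdin rather than as an argument
    hash=$(printf '%s\n' "$password" | openssl passwd -stdin -apr1) || return 1
    # ">" overwrites the file, so a rerun leaves exactly one entry
    printf '%s:%s\n' "$user" "$hash" > "$auth_file"
    # The secret key must be named "auth" for the nginx ingress controller
    kubectl -n longhorn-system create secret generic basic-auth \
        --from-file=auth="$auth_file" --dry-run=client -o yaml | kubectl apply -f -
}

# Usage on the head node (choose your own credentials):
# create_basic_auth admin 'a-strong-password'
```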

5. Access the Longhorn UI

a. Find the cluster IP of the longhorn-frontend service:

root@longhorn:~# kubectl -n longhorn-system get svc
NAME                          TYPE        CLUSTER-IP       EXTERNAL-IP   PORT(S)    AGE
longhorn-admission-webhook    ClusterIP   10.150.216.211   <none>        9502/TCP   5m28s
longhorn-backend              ClusterIP   10.150.129.12    <none>        9500/TCP   5m28s
longhorn-conversion-webhook   ClusterIP   10.150.37.126    <none>        9501/TCP   5m28s
longhorn-engine-manager       ClusterIP   None             <none>        <none>     5m28s
longhorn-frontend             ClusterIP   10.150.230.71    <none>        80/TCP     5m28s
longhorn-recovery-backend     ClusterIP   10.150.187.90    <none>        9503/TCP   5m28s
longhorn-replica-manager      ClusterIP   None             <none>        <none>     5m28s

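b. In your browser, go to the cluster IP of longhorn-frontend (http://10.150.230.71 in the output above). A ClusterIP address is normally reachable only from within the cluster, so the browser must run on a machine that can route to it. Connecting to it directly also bypasses the Ingress, so the basic authentication from step 4 is not requested. To use the authentication, connect through the ingress controller's address instead.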
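You can read the address directly from the service instead of from the table. `longhorn_ui_url` is a hypothetical helper. For a browser outside the cluster network, `kubectl port-forward` to the service's port 80 is included below as a commented alternative; it serves the UI on the machine running kubectl.

```shell
#!/bin/sh
# Hypothetical helper: print the URL of the longhorn-frontend service,
# built from its ClusterIP (port 80, as listed by "get svc").
longhorn_ui_url() {
    ip=$(kubectl -n longhorn-system get svc longhorn-frontend -o jsonpath='{.spec.clusterIP}') || return 1
    [ -n "$ip" ] || return 1
    printf 'http://%s/\n' "$ip"
}

# Usage on the head node:
# longhorn_ui_url
# Alternative from a machine outside the cluster network (then open http://localhost:8080/):
# kubectl -n longhorn-system port-forward svc/longhorn-frontend 8080:80
```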

Testing Longhorn

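1. Create a storage class. The example below creates a class named longhorn-test. The Helm chart has already created a default class named longhorn, and that is the class the test PVC in the next step uses.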

root@longhorn:~# kubectl create -f https://raw.githubusercontent.com/longhorn/longhorn/v1.5.3/examples/storageclass.yaml
storageclass.storage.k8s.io/longhorn-test created
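To pick the class name and replica count yourself, you can generate the manifest. The provisioner `driver.longhorn.io` and the parameters `numberOfReplicas`, `staleReplicaTimeout` and `fsType` are, as far as we know, the ones in Longhorn's example storage class. Compare them with storageclass.yaml before relying on them. `longhorn_storageclass` and the class name `longhorn-2r` are hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: print a Longhorn StorageClass manifest with a chosen
# name and replica count. Parameter names are assumed to match Longhorn's
# example storageclass.yaml.
longhorn_storageclass() {
    name=$1
    replicas=${2:-3}
    cat <<EOF
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: $name
provisioner: driver.longhorn.io
allowVolumeExpansion: true
reclaimPolicy: Delete
volumeBindingMode: Immediate
parameters:
  numberOfReplicas: "$replicas"
  staleReplicaTimeout: "2880"
  fsType: "ext4"
EOF
}

# Usage on the head node:
# longhorn_storageclass longhorn-2r 2 | kubectl apply -f -
```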

2. Create a pod that uses a Longhorn volume, then check the pod, PVC, and PV:

root@longhorn:~# kubectl create -f https://raw.githubusercontent.com/longhorn/longhorn/v1.5.3/examples/pod_with_pvc.yaml
persistentvolumeclaim/longhorn-volv-pvc created
pod/volume-test created
root@longhorn:~# kubectl get pods
NAME          READY   STATUS    RESTARTS   AGE
volume-test   1/1     Running   0          37s
root@longhorn:~# kubectl get pvc
NAME                STATUS   VOLUME                                     CAPACITY   ACCESS MODES   STORAGECLASS   AGE
longhorn-volv-pvc   Bound    pvc-db60d9fa-9ebb-41ef-911b-b79214bcca5a   2Gi        RWO            longhorn       59s
root@longhorn:~# kubectl get pv
NAME                                       CAPACITY   ACCESS MODES   RECLAIM POLICY   STATUS   CLAIM                       STORAGECLASS   REASON   AGE
pvc-db60d9fa-9ebb-41ef-911b-b79214bcca5a   2Gi        RWO            Delete           Bound    default/longhorn-volv-pvc   longhorn                59s
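Rather than rerunning `kubectl get` until the PVC is Bound and the pod is Running, you can have `kubectl wait` block until both are true. The sketch uses `wait_for_volume_test`, a hypothetical helper. The jsonpath form of `--for` assumes kubectl 1.23 or later.

```shell
#!/bin/sh
# Hypothetical helper: wait until the test PVC is Bound, then until the test
# pod is Ready. Names default to those created by pod_with_pvc.yaml.
wait_for_volume_test() {
    pvc=${1:-longhorn-volv-pvc}
    pod=${2:-volume-test}
    timeout=${3:-300s}
    kubectl wait --for=jsonpath='{.status.phase}'=Bound "pvc/$pvc" --timeout="$timeout" &&
        kubectl wait --for=condition=Ready "pod/$pod" --timeout="$timeout"
}

# Usage on the head node; Longhorn's own view of the volume is in volumes.longhorn.io:
# wait_for_volume_test && kubectl -n longhorn-system get volumes.longhorn.io
```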

3. Check the mounted volume inside the pod and create a test file on it:

root@longhorn:~# kubectl exec -it volume-test -- /bin/sh
/ # df -hT
Filesystem Type Size Used Available Use% Mounted on
overlay overlay 100.0G 13.8G 86.2G 14% /
tmpfs tmpfs 64.0M 0 64.0M 0% /dev
/dev/longhorn/pvc-db60d9fa-9ebb-41ef-911b-b79214bcca5a ext4 1.9G 24.0K 1.9G 0% /data
/dev/vdb1 xfs 100.0G 13.8G 86.2G 14% /etc/hosts
/dev/vdb1 xfs 100.0G 13.8G 86.2G 14% /dev/termination-log
/dev/vdb1 xfs 100.0G 13.8G 86.2G 14% /etc/hostname
/dev/vdb1 xfs 100.0G 13.8G 86.2G 14% /etc/resolv.conf
shm tmpfs 64.0M 0 64.0M 0% /dev/shm
tmpfs tmpfs 7.7G 12.0K 7.7G 0% /run/secrets/kubernetes.io/serviceaccount
tmpfs tmpfs 3.9G 0 3.9G 0% /proc/acpi
tmpfs tmpfs 64.0M 0 64.0M 0% /proc/kcore
tmpfs tmpfs 64.0M 0 64.0M 0% /proc/keys
tmpfs tmpfs 64.0M 0 64.0M 0% /proc/timer_list
tmpfs tmpfs 3.9G 0 3.9G 0% /proc/scsi
tmpfs tmpfs 3.9G 0 3.9G 0% /sys/firmware
/ # touch /data/adel
/ # ls -l /data/
total 16
-rw-r--r-- 1 root root 0 Nov 28 15:23 adel
drwx------ 2 root root 16384 Nov 28 15:21 lost+found
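Writing a file shows only that the volume is mounted. A stronger check is that the data survives deleting and re-creating the pod, because the PVC, and the Longhorn volume behind it, outlives the pod. The sketch below assumes the pod name and manifest URL from the example above. `check_persistence` and the file /data/marker are hypothetical. It re-creates the pod with `kubectl apply` because the PVC from the same manifest still exists.

```shell
#!/bin/sh
# Hypothetical helper: write a marker file to the Longhorn volume, delete and
# re-create the test pod, and check that the marker is still there.
MANIFEST=https://raw.githubusercontent.com/longhorn/longhorn/v1.5.3/examples/pod_with_pvc.yaml
check_persistence() {
    kubectl exec volume-test -- sh -c 'echo persisted > /data/marker' || return 1
    kubectl delete pod volume-test --wait=true || return 1
    # Re-create the pod; the existing PVC is left as it is
    kubectl apply -f "$MANIFEST" || return 1
    kubectl wait --for=condition=Ready pod/volume-test --timeout=300s || return 1
    [ "$(kubectl exec volume-test -- cat /data/marker)" = "persisted" ]
}

# Usage on the head node:
# check_persistence && echo "data survived the pod restart"
# Cleanup when done (removes the pod and the PVC; with reclaim policy Delete,
# the volume is removed as well):
# kubectl delete -f "$MANIFEST"
```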

References

https://longhorn.io/docs/1.5.3/deploy/install/

https://longhorn.io/docs/1.5.3/deploy/install/install-with-helm/

https://longhorn.io/docs/1.5.3/deploy/accessing-the-ui/

https://longhorn.io/docs/1.5.3/deploy/accessing-the-ui/longhorn-ingress/